Running Loops at Midnight

Six months of AI changed everything. The people building it haven't changed at all.

Six months ago, I wrote that our greatest tool is still each other. I was right. But the world around that truth looks nothing like it did.

George Hotz published a blog post this week with the title “Every minute you aren’t running 69 agents, you are falling behind.” If you only read the headline, you’d think it was another entry in the LinkedIn anxiety machine. The endless scroll of posts telling you that if you’re not using the latest AI tool, you’re already obsolete.

But Hotz (geohot, as he’s known online) is arguing the opposite. The title is satire. His point: the pressure is manufactured. AI is “just search and optimization” with inherent computational limits. And the path forward isn’t panic. It’s creating more value than you consume.

I agree with him. But I also think something genuinely important happened in the six months since I wrote In an Age of AI, Our Greatest Tool is Still Each Other. Not the kind of important that LinkedIn influencers want you to believe. Not “you’re falling behind” important. The kind of important that only becomes visible when you stop scrolling and start building.

The Six Months That Changed Everything

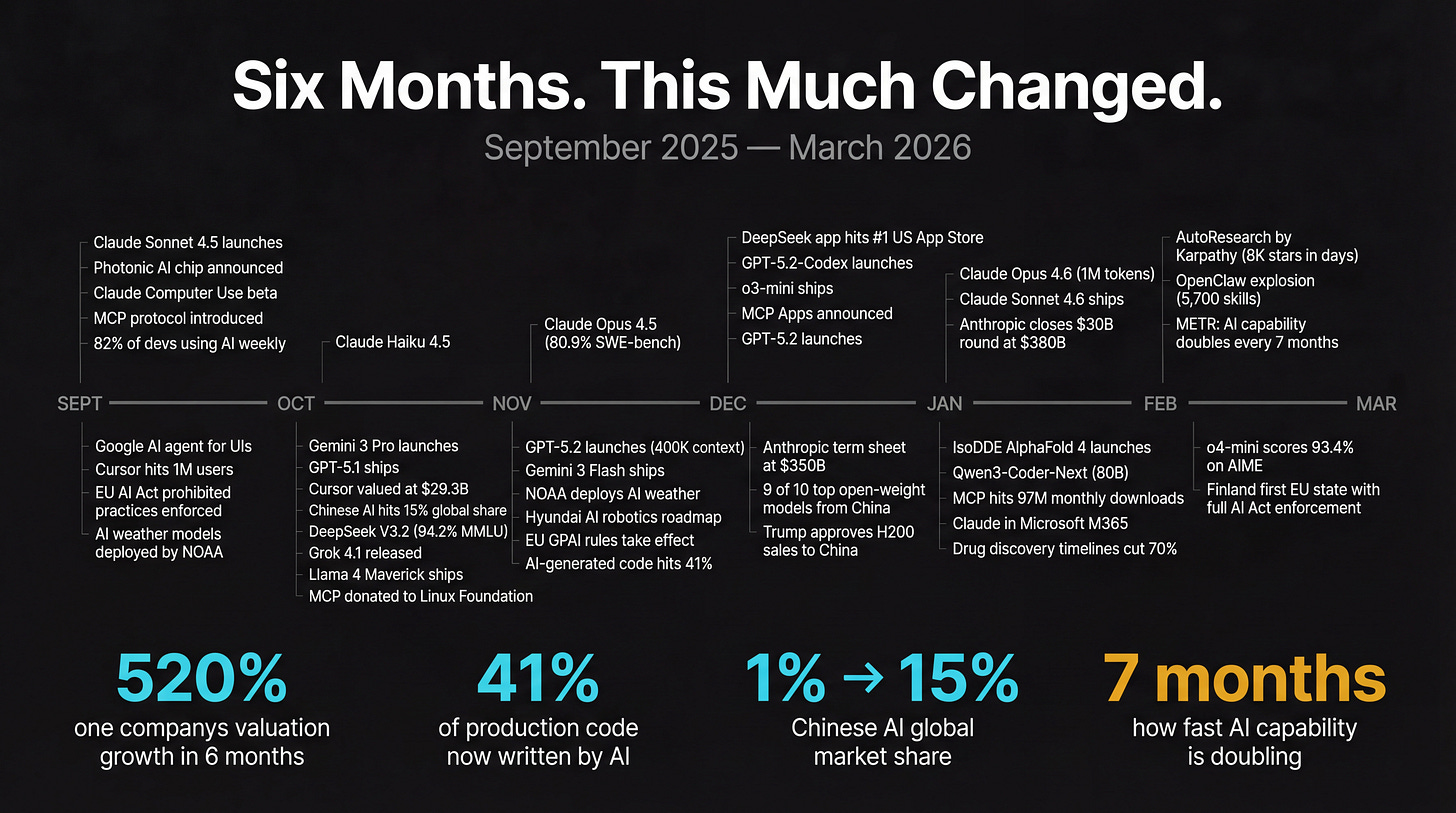

Let me just lay out what happened between September 2025 and March 2026. Because when you see it compressed into a list, the velocity is staggering.

Claude went from Sonnet 4.5 to Opus 4.6, with context windows expanding to one million tokens. GPT moved through 5.0, 5.1, and 5.2, plus a specialized Codex variant. Google shipped Gemini 3. DeepSeek released models that matched US proprietary APIs at a fraction of the cost, and their app hit #1 on the US App Store in January. Nine of the top ten open-weight models globally now come from China.

Anthropic’s valuation went from $61.5 billion to $380 billion. Cursor, a coding editor most people hadn’t heard of a year ago, hit a $29.3 billion valuation with $1.2 billion in annual revenue. The Model Context Protocol (MCP), an open standard for connecting AI to tools, reached 97 million monthly SDK downloads and 5,800 community servers.

Forty-one percent of all production code is now AI-generated. METR published research showing that the length of tasks AI agents can complete on their own has been doubling approximately every seven months. Isomorphic Labs released what researchers are calling “AlphaFold 4,” cutting drug discovery timelines by 70%.
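The seven-month doubling figure is easier to feel as arithmetic. A minimal sketch, assuming the trend holds exactly (real measurements are noisy, and nothing guarantees the trend continues): over the six months this post covers, that rate works out to roughly a 1.8x increase, and about 3.3x over a full year. The function name is illustrative.

```python
def capability_multiplier(months: float, doubling_months: float = 7.0) -> float:
    """Growth factor after `months`, assuming a clean doubling every `doubling_months`."""
    return 2 ** (months / doubling_months)


if __name__ == "__main__":
    # Print the implied growth over a few horizons.
    for months in (6, 12, 24):
        print(f"{months:>2} months -> {capability_multiplier(months):.2f}x")
```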

The EU AI Act moved from theoretical future concern to active enforcement, with real fines for non-compliance. Reasoning models like o4-mini scored 93.4% on the AIME competition-math benchmark. Claude’s API costs dropped 67% while its performance improved across every benchmark.

All of this. Six months.

Two Things Worth Paying Attention To

Underneath the model releases and valuation headlines, two things happened that I think will outlast all of them. Not because they represent some technical breakthrough. Because they represent something more fundamental: convergence.

The first is OpenClaw. The second is AutoResearch.

Neither of these is a new model. Neither required a billion-dollar GPU cluster. Neither came from a research lab with a hundred PhDs. And yet, when I look at where AI becomes useful (not impressive, useful), these two projects tell a bigger story than any frontier model announcement.

OpenClaw: A Cron Job, a Markdown File, and a Messaging Gateway

OpenClaw is an open-source autonomous AI agent created by Peter Steinberger. On the surface, it’s straightforward: a bot that runs on your messaging apps (Telegram, WhatsApp, Discord) and can do things for you, using whatever LLM you choose.

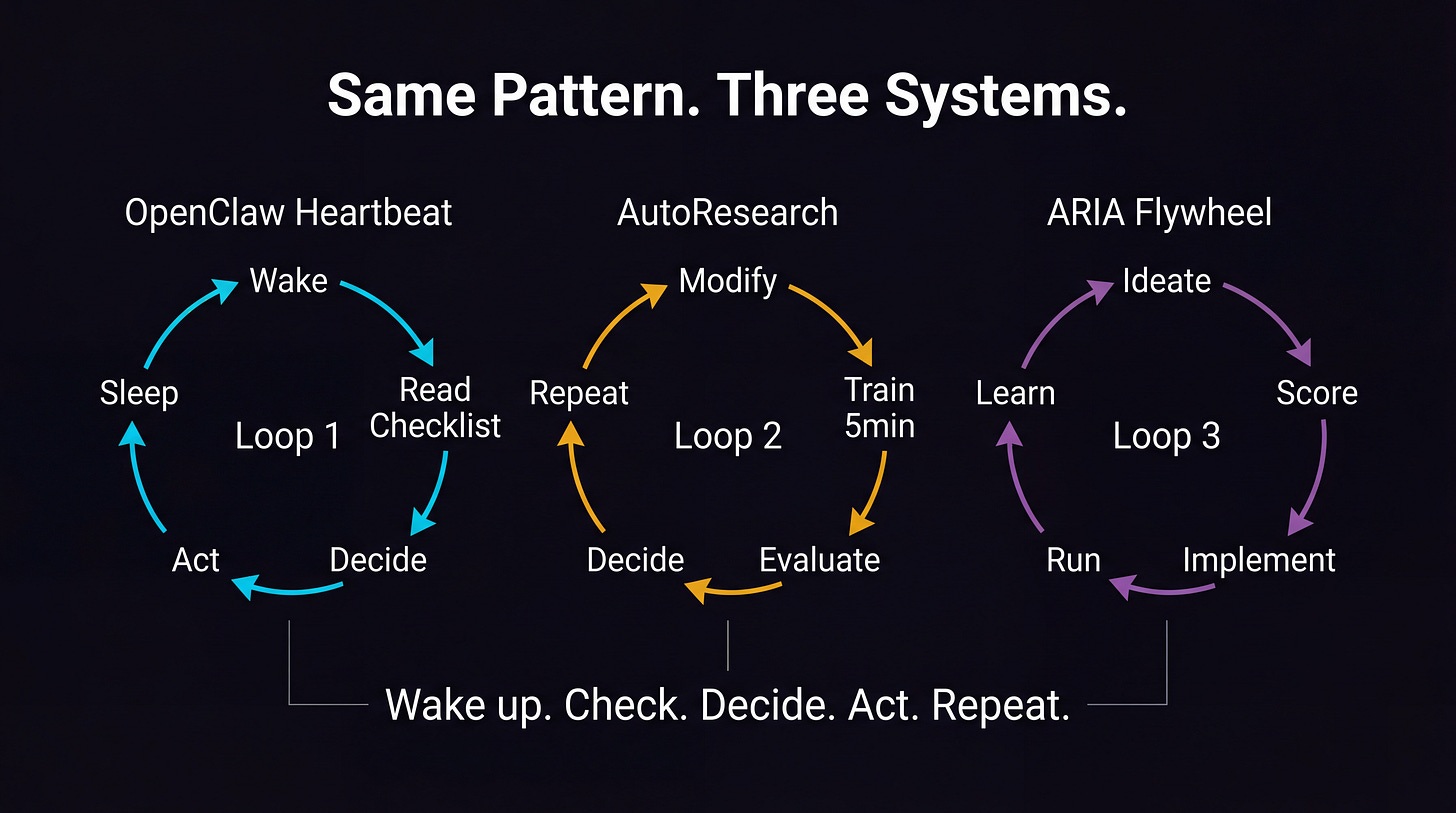

But the interesting part isn’t what it does. It’s the architecture. Five components: a gateway for routing messages, a brain for LLM calls, memory stored as plain Markdown files on disk, a plugin system of community skills, and the heartbeat, the piece that makes it all work.

The heartbeat is a cron job. Every thirty minutes, it wakes the agent up. The agent reads a checklist (a Markdown file called HEARTBEAT.md). It decides: does anything need my attention right now? If yes, it acts. If no, it responds with HEARTBEAT_OK, and the system suppresses the message. Nobody gets bothered.
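A single heartbeat tick can be sketched in a few lines of Python. This is a minimal illustration of the pattern described above, not OpenClaw’s actual code: `heartbeat_tick`, `decide`, and `send` are hypothetical names, with `decide` standing in for the LLM call and `send` for the messaging gateway. Scheduling is left to cron, for example a crontab entry like `*/30 * * * * python heartbeat.py` (the script name is also hypothetical).

```python
from pathlib import Path
from typing import Callable, Optional

# Sentinel reply meaning "nothing needs attention"; the tick suppresses it.
HEARTBEAT_OK = "HEARTBEAT_OK"


def heartbeat_tick(
    checklist_path: Path,
    decide: Callable[[str], str],
    send: Callable[[str], None],
) -> Optional[str]:
    """Run one heartbeat: read the checklist, ask the model, then deliver or stay quiet.

    `decide` is a hypothetical stand-in for the LLM call: it receives the checklist
    text and returns either HEARTBEAT_OK or a message for the user.
    """
    # A missing checklist is treated as empty rather than as an error.
    checklist = checklist_path.read_text(encoding="utf-8") if checklist_path.exists() else ""
    reply = decide(checklist).strip()
    if reply == HEARTBEAT_OK:
        return None  # suppressed: nobody gets bothered
    send(reply)  # hand the message to the (hypothetical) messaging gateway
    return reply


if __name__ == "__main__":
    # Stub model: flag unchecked Markdown tasks, otherwise report all clear.
    def stub_decide(checklist: str) -> str:
        open_items = [line for line in checklist.splitlines() if line.startswith("- [ ]")]
        return f"{len(open_items)} open item(s) need attention" if open_items else HEARTBEAT_OK

    heartbeat_tick(Path("HEARTBEAT.md"), stub_decide, print)
```

Returning `None` on the quiet path keeps the common case (nothing to report) silent and easy to test; only a real message ever reaches the gateway.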

That’s it. That’s the innovation. A timer, a checklist, and a decision loop.

And somehow, this simple pattern produced something that 5,700 community contributors have built skills for. Something that runs 24/7 on a cheap server, checking your inbox, monitoring your deployments, summarizing your feeds, following up on tasks you forgot about.

The reason it works isn’t the LLM. The LLM is a commodity now. The reason it works is that someone composed a handful of simple, well-understood components (cron scheduling, Markdown persistence, messaging APIs, a ReAct reasoning loop) into a system that compounds over time.

I wrote about this pattern six months ago in The Context Graph: the shift from systems of record to systems of agents, where the value isn’t in storing data but in capturing the reasoning behind decisions. OpenClaw’s memory is exactly that: plain text files that grow richer with every interaction, every heartbeat, every decision the agent makes.
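To make “plain text files that grow richer” concrete, here is a minimal sketch of append-only Markdown memory. The one-file-per-day layout and the `remember`/`recall` helpers are assumptions for illustration, not OpenClaw’s actual storage format.

```python
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def remember(memory_dir: Path, note: str, now: Optional[datetime] = None) -> Path:
    """Append one timestamped bullet to the day's Markdown memory file and return its path."""
    now = now or datetime.now(timezone.utc)
    memory_dir.mkdir(parents=True, exist_ok=True)
    path = memory_dir / f"{now:%Y-%m-%d}.md"  # hypothetical layout: one file per day
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- {now:%H:%M} {note}\n")
    return path


def recall(memory_dir: Path) -> str:
    """Concatenate every memory file, oldest day first, ready to prepend to a prompt."""
    # ISO dates in the filenames make lexical order match chronological order.
    return "".join(p.read_text(encoding="utf-8") for p in sorted(memory_dir.glob("*.md")))
```

Because the memory is just Markdown on disk, a person can open, edit, or grep it, which makes the agent’s accumulated context easy to inspect.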

AutoResearch: 630 Lines and a Five-Minute Loop

Andrej Karpathy released AutoResearch in March 2026. It’s a 630-line Python script. One file. It does one thing: lets an AI agent run autonomous machine learning experiments on a single GPU.

The loop is almost comically simple. The agent modifies a training file. It trains a model for five minutes. It checks the validation metric. It decides what to try next. It repeats. You go to sleep, and by morning, it’s run a hundred experiments.
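The try, measure, decide, repeat cycle can be sketched as a greedy hill-climb. This is a minimal sketch under stated assumptions, not Karpathy’s implementation: the agent’s edits to the training file are modeled as changes to a config dict, `propose` is a hypothetical stand-in for the LLM deciding what to try next, and `train_and_evaluate` is a hypothetical stand-in for a fixed five-minute training run that returns a validation loss (lower is better).

```python
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]
History = List[Tuple[Config, float]]


def research_loop(
    baseline: Config,
    propose: Callable[[Config, History], Config],
    train_and_evaluate: Callable[[Config], float],
    n_experiments: int,
) -> Tuple[Config, float, History]:
    """Greedy try-measure-keep loop: returns the best config, its metric, and the full log."""
    best_cfg = dict(baseline)
    best_metric = train_and_evaluate(best_cfg)  # measure the starting point first
    history: History = [(best_cfg, best_metric)]
    for _ in range(n_experiments):
        candidate = propose(best_cfg, history)  # the agent decides what to try next
        metric = train_and_evaluate(candidate)  # one fixed-budget training run
        history.append((candidate, metric))
        if metric < best_metric:  # keep the change only if validation loss improved
            best_cfg, best_metric = candidate, metric
    return best_cfg, best_metric, history
```

At five minutes per run, a hundred experiments is 500 minutes, a little over eight hours of training, which is why the loop fits into a single night. Passing the full history to `propose` is what lets the agent learn from failed attempts rather than only from the current best.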

The repository hit 8,000 GitHub stars in days.

“The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement.” That’s Karpathy’s description. Not a research paper. Not a framework with 47 dependencies. A single file and a clear loop.

What strikes me about AutoResearch isn’t the code. It’s the philosophy. Karpathy stripped everything down to the essential pattern: try, measure, learn, repeat. No human in the loop. No complex orchestration. Just a tight cycle running all night.

I’ve been running a version of this pattern for months. ARIA, my autonomous research system, operates on the same principle: a flywheel with 14 possible actions that scores every idea on five dimensions and routes each task to the right model (fast models for validation, capable models for creative work, the best model for code). Over 5,000 sessions. 100 active ideas. 250 completed experiments with real data so far.
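The scoring and routing ideas can be sketched in a few lines. Everything here is hypothetical: the post does not name ARIA’s five scoring dimensions or its models, so the dimension names, the equal weighting, and the tier labels are placeholders chosen only to show the shape of the idea.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical tier labels; the post does not name ARIA's actual models.
ROUTES: Dict[str, str] = {
    "validation": "fast-model",
    "creative": "capable-model",
    "code": "best-model",
}
DEFAULT_TIER = "capable-model"

# Hypothetical names for the five scoring dimensions, weighted equally.
DIMENSIONS = ("novelty", "feasibility", "impact", "clarity", "evidence")


@dataclass
class Idea:
    name: str
    scores: Dict[str, float]  # one score per dimension, e.g. on a 0-10 scale

    def total(self) -> float:
        """Equal-weight average across the five dimensions."""
        return sum(self.scores[d] for d in DIMENSIONS) / len(DIMENSIONS)


def route(task_kind: str) -> str:
    """Pick a model tier for a task; unknown task kinds fall back to the default tier."""
    return ROUTES.get(task_kind, DEFAULT_TIER)


def rank(ideas: List[Idea]) -> List[Idea]:
    """Highest-scoring ideas first, so the flywheel works on the most promising one next."""
    return sorted(ideas, key=lambda idea: idea.total(), reverse=True)
```

A lookup table keeps routing cheap and auditable; swapping a model for a whole class of tasks is a one-line change.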

AutoResearch makes this accessible to anyone with a GPU and a Python environment. That matters.

The Pattern Underneath

Here’s what I want you to see. OpenClaw’s heartbeat and AutoResearch’s training loop are the same pattern. Wake up. Check the state. Decide what to do. Act. Go back to sleep.
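Stripped to its skeleton, the shared pattern is one small function. A minimal sketch: `observe`, `decide`, and `act` are hypothetical callables you supply (a heartbeat would read a checklist; a research loop would read the last validation metric), and `sleep` is injectable so the loop can be tested without actually waiting.

```python
import time
from typing import Callable, Optional, TypeVar

S = TypeVar("S")  # whatever "state" means for this loop
A = TypeVar("A")  # whatever "action" means for this loop


def run_loop(
    observe: Callable[[], S],
    decide: Callable[[S], Optional[A]],
    act: Callable[[A], None],
    interval_s: float,
    max_ticks: int,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Wake, check state, decide, act, sleep. Returns how many times it acted."""
    actions = 0
    for tick in range(max_ticks):
        state = observe()  # wake up and check the state
        action = decide(state)  # None means "nothing to do this time"
        if action is not None:
            act(action)
            actions += 1
        if tick < max_ticks - 1:
            sleep(interval_s)  # go back to sleep until the next tick
    return actions
```

With `interval_s=1800` this is the thirty-minute heartbeat; with no sleep and a training run inside `act`, it is closer to the AutoResearch loop.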

This isn’t a new idea. Reinforcement learning has been doing this for decades. Control theory before that. What’s new is that the “decide what to do” step is now handled by language models that are good enough, cheap enough, and fast enough to make the pattern practical for everyday problems.

Six months ago, the pieces existed independently. Better reasoning models. Cheaper inference. Tool use through MCP. Persistent memory. Open-source skills. Each one was impressive on its own, and each one generated its own hype cycle.

What OpenClaw and AutoResearch show is what happens when you stop admiring the pieces and start composing them. A cron job plus a language model plus Markdown files equals a personal agent that never sleeps. A training script plus an LLM loop equals a research assistant that runs a hundred experiments overnight.

The convergence isn’t about any single technology. It’s about composition. Small, proven components assembled into systems that compound.

I wrote about this in Your AI Strategy Should Be 1,000 Small Bets. The thesis was that bottom-up experimentation beats top-down transformation. That the real bottlenecks aren’t technical (access, permission, and culture are what hold people back). That when you remove friction and let people experiment, 480 participants will generate 40 solutions you never planned for.

What I didn’t anticipate was how fast the bets would start converging. The community skills in OpenClaw didn’t come from a product roadmap. They came from 5,700 people scratching their own itches. AutoResearch didn’t come from a funded research program. It came from one person who wanted his GPU to be useful while he slept.

The Builder’s Moment

Six months ago, I wrote about the 1:N effect: one person with AI collaborators producing output that used to require a team. That was true then. It’s more true now, but the nature of it has shifted.

It’s not just that the tools are faster. It’s that they’re composable. You can wire a heartbeat loop to a research agent to a memory system to a messaging gateway, and the whole thing runs while you’re having dinner with your family. Not because any one component is magical. Because the interfaces between them finally work.

MCP gave us the USB-C for AI connections. Reasoning models gave us agents that can make decisions, not just generate text. Cost compression made it feasible to run these loops continuously instead of rationing every API call. Open source made the building blocks available to everyone.
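The cost point is easy to check with back-of-the-envelope arithmetic. A minimal sketch: a thirty-minute heartbeat wakes 48 times a day; the tokens per wake-up and the per-million-token price used below are hypothetical inputs, not quoted prices, so substitute your own.

```python
def wakeups_per_day(interval_minutes: int) -> int:
    """How many times a fixed-interval loop wakes in 24 hours."""
    return (24 * 60) // interval_minutes


def monthly_cost(
    interval_minutes: int,
    tokens_per_wakeup: int,
    usd_per_million_tokens: float,
    days: int = 30,
) -> float:
    """Rough monthly spend for a loop that calls a model on every wake-up."""
    tokens = wakeups_per_day(interval_minutes) * tokens_per_wakeup * days
    return tokens / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    # Hypothetical inputs: 3,000 tokens per wake-up at $3 per million tokens.
    print(f"${monthly_cost(30, 3_000, 3.0):.2f} per month")
```

With those made-up inputs the loop uses 4.32 million tokens and costs $12.96 a month; the point is the shape of the calculation, since every price cut scales that figure down linearly.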

These aren’t things that happened because someone published a breakthrough paper. They happened because a thousand small bets, made by thousands of people working independently, started to rhyme.

Still Each Other

Here’s where I come back to geohot. And to the thing I wrote six months ago.

The people building these systems aren’t the ones panicking on LinkedIn. They’re not worried about falling behind. They’re too busy running loops. Small, patient, compounding loops. Building something, measuring it, learning from it, and sharing what they found.

I came across a piece on curiosity-driven self-education right before publishing this, and it stopped me. The research describes how people who teach themselves through curiosity develop what psychologists call “peripheral vision for problems.” They notice edges, context, contributing factors. They sit with confusion instead of reaching for predetermined frameworks. That’s the builder’s temperament. That’s the person running loops at midnight, not the person doom-scrolling LinkedIn at noon.

Karpathy open-sourced AutoResearch under an MIT license. Steinberger and 5,700 contributors built OpenClaw’s skill library in public. The MCP specification was donated to the Linux Foundation. These aren’t competitive moves. They’re acts of generosity that happen to also be good engineering.

The anxiety is misplaced. The future doesn’t belong to whoever runs the most agents. It belongs to whoever builds the tightest loops, and then shares them.

Six months ago, I wrote that in an age of AI, our greatest tool is still each other. That professionals trust their networks over algorithms. That human judgment, trust-building, and relationship quality remain competitive advantages that technology can’t replace.

Nothing that happened in the last six months changed that. If anything, the convergence reinforced it. The most important AI systems being built right now aren’t the ones with the biggest parameter counts or the highest benchmark scores. They’re the ones that amplify what people already do well: experiment, share, learn, and build on each other’s work.

The next six months will be faster. The models will be better. The costs will be lower.

The hype will be louder.

But the pattern won’t change. Small bets. Tight loops. Shared generously. Compounding quietly. That’s the convergence.

And it’s just getting started.