Sunday Deep Dive: The Specialists Are Coming for the Generalists

Every Sunday, I pick one paper or release that’s genuinely worth your time, break it apart, and tell you why it matters. No hype. No summaries of summaries. Just the idea, explained.

The Headline

The loud story this quarter is that an open-weights Chinese model beat Claude Opus on a real coding benchmark. Every tech newsletter ran it.

The quiet story is that the specialists are coming for the generalists, and they’re small enough to run on your laptop.

Hamel Husain, who has trained more applied LLM engineers than anyone I know, put the case in one sentence:

“Open models aren’t always better, but the more narrow your task, the more open models will shine because you can fine tune that model and really differentiate them.”

This week’s deep dive is about the quiet story.

A Quick DeepSeek Refresher

Set the table briefly, because the rest of the post depends on it.

January 2025. DeepSeek-R1 ships. A reasoning model from a Chinese lab that matches OpenAI’s o1, with open weights and a training cost an order of magnitude lower than the closed labs had implied was possible. NVIDIA’s stock dropped sharply the following week, the market panicked, and the financial story made the front page.

The financial story was the wrong story.

The real story was the permission slip. Every other open-weights lab (Qwen, GLM, MiniMax, Mistral, the Llama group) took the gloves off. By April 2026, that permission slip is showing up everywhere. And the strangest thing about it is that the most interesting consequence isn’t at the frontier.

The Loud Story (Quick Flyby)

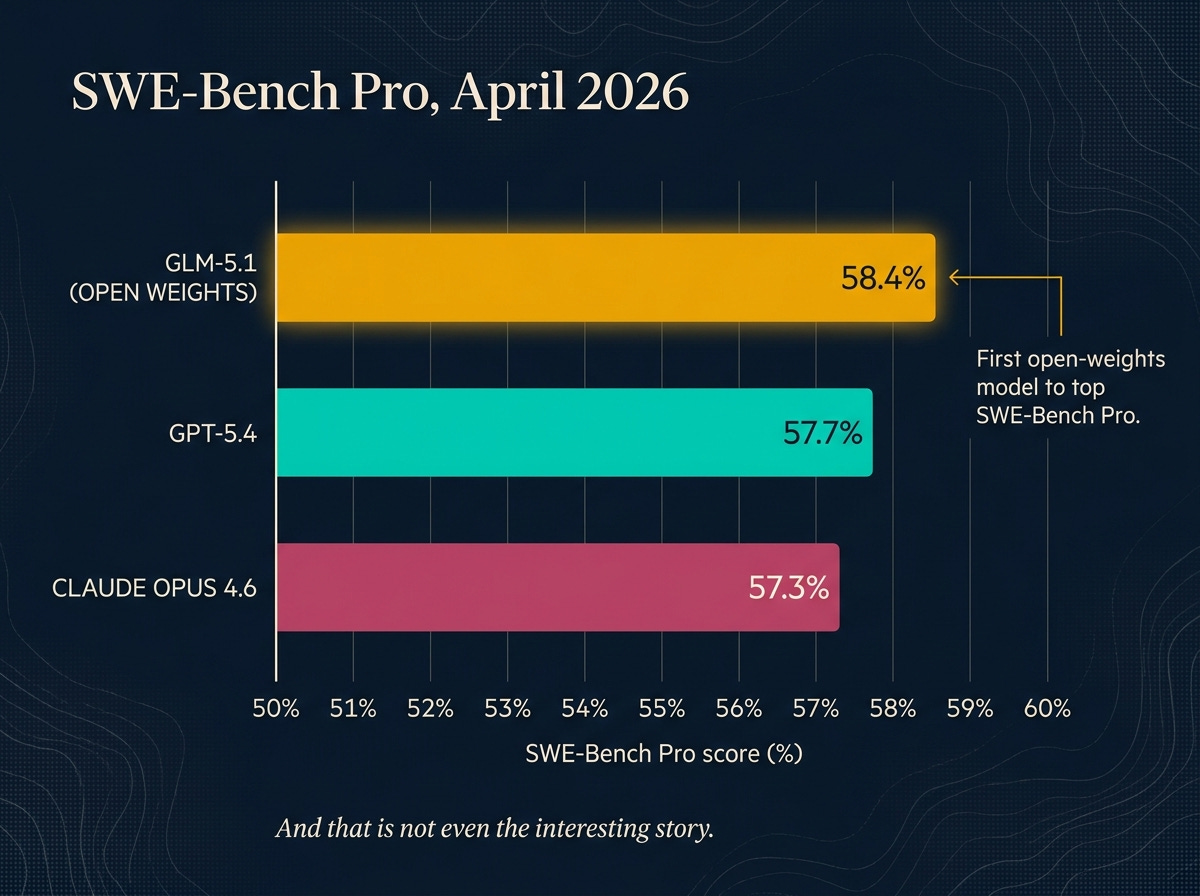

The benchmark headline is real. Z.ai’s GLM-5.1 beats GPT-5.4 and Claude Opus 4.6 on SWE-Bench Pro, the most-cited real-world coding benchmark in the field.

That is, by any reasonable measure, “open caught up to closed at the frontier on coding.” It’s a categorical change from where we were a year ago.

And it isn’t a one-off. The release cadence over the not-quite-three weeks I spent finishing this post:

April 7 — GLM-5.1 lands the SWE-Bench Pro number above.

April 12 — MiniMax M2.7 drops open weights on HuggingFace. 229B MoE, 56% on SWE-Bench Pro.

April 21 — Kimi K2.6 ships GA with 12-hour autonomous coding sessions and 300-agent swarms.

April 22 — Qwen 3.6-27B, a dense 27B model, beats the previous-generation 397B MoE on coding benchmarks.

April 24 — DeepSeek V4 preview drops in two sizes with explicit Claude Code integration. I’ve been running V4 in my own coding agent as a Claude swap-in on real tasks. Results hold up.

Five frontier-grade open releases in under three weeks. If you only read the GLM headline, you missed the cadence.

The interesting story isn’t even at the top of the leaderboard. It’s one tier down.

Meet the Small Models

Two anchors. Pay attention to the second number.

Gemma 4 26B (Google, April 2026). 26 billion parameters total, but only 3.8 billion active per token. Runs on a 16GB consumer GPU or an Apple Silicon Mac with 32GB of RAM; the smaller E2B variant runs natively and offline on an iPhone. It got six separate top-300 Hacker News threads in two weeks. One Reddit operator wrote: “Gemma 4 just casually destroyed every model on our leaderboard except Opus 4.6 and GPT-5.2. 31B params, $0.20/run.”

Qwen 3.6 35B-A3B (Alibaba, April 2026). 35 billion parameters total, 3 billion active per query. Apache 2.0 license. The release HN thread, with 1,263 points, was titled: “Qwen3.6-35B-A3B on my laptop drew a better pelican than Claude Opus 4.7.”

The pelican test is a Simon Willison thing. He gives every new model the same prompt: “draw a pelican riding a bicycle, in SVG.” It’s been a useful informal benchmark for the gap between hype and capability. A 35B-total, 3B-active model running on someone’s laptop drawing a better pelican than the trillion-dollar closed-API offering is the kind of moment people remember.

Neither of these models is trying to be the frontier. They’re not chasing GLM-5.1 on SWE-Bench Pro. They’re the new floor.

Why They’re Small (the Architecture)

The number that matters in both descriptions above is “active parameters.” Total parameters set the file size on disk and the memory the model needs once it is loaded. Active parameters set the compute cost per token. The two numbers are radically different now, and the reason is an architecture called Mixture of Experts.

Here’s the analogy.

Imagine a hospital with 100 specialists on staff. A surgeon. An anesthesiologist. A cardiologist. A radiologist. Ninety-six others. Most patients only ever see three of them on a given day. The surgeon for the operation, the anesthesiologist for the procedure, the radiologist for the follow-up scan. The other 97 don’t show up.

The hospital is “100 doctors big.” But the cost-per-patient is “3 doctors big.”

That’s MoE. Mixture of Experts. The model has a lot of total parameters. But for any given token, a small router sends the computation through only a small fraction of them. You get the breadth that comes from training a larger network without paying the per-token cost of running all of it.
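To make the hospital picture concrete, here is a minimal sketch of top-k routing in plain Python. It is a toy under loud assumptions: the 100 "experts" are scalar functions instead of neural network blocks, and the router scores are random numbers, where a real model would compute them from the token with a learned layer. The names `route_token` and `moe_layer` are mine, not from any library.

```python
import math
import random

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_layer(token, experts, router_scores, k=3):
    """Run only the chosen experts and mix their outputs by router weight."""
    chosen = route_token(router_scores, k)
    output = sum(weight * experts[idx](token) for idx, weight in chosen)
    return output, [idx for idx, _ in chosen]

# Toy setup (assumption): 100 stand-in experts, random router scores.
random.seed(0)
experts = [lambda x, scale=i + 1: x * scale for i in range(100)]
router_scores = [random.gauss(0, 1) for _ in experts]

output, used = moe_layer(2.0, experts, router_scores, k=3)
print(f"experts used: {len(used)} of {len(experts)}")
```

All 100 experts exist in memory, but only three do any work for this token. That is the "100 doctors big, 3 doctors per patient" split in code form.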

Quick glossary

MoE (Mixture of Experts). Model architecture where only a subset of parameters activate per input. Not new (research goes back to the 1990s), but only recently practical at frontier scale.

Active parameters. The ones that actually compute on a given token. The cost number that matters.

Total parameters. All the weights stored on disk, all of which still have to fit in memory at run time. The file-size number.

Quantization. Rounding model weights to lower-precision numbers (e.g. 16-bit to 4-bit) to fit them in less memory. TurboQuant, the Google paper from March 2026, was the breakthrough on this for inference-time KV caches. (I covered it in a previous Sunday Deep Dive.)
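The glossary terms reduce to simple arithmetic, sketched below. The parameter counts are the ones quoted earlier in this post; everything else is an assumption for illustration. In particular, the estimate covers weights only and ignores the KV cache, activations, and the small overhead real quantization formats add for scaling factors. The helper names are hypothetical.

```python
def weight_memory_gb(total_params_billion, bits_per_weight):
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = total_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9

def active_fraction(active_params_billion, total_params_billion):
    """Share of the weights that do work on any single token."""
    return active_params_billion / total_params_billion

# (total, active) in billions of parameters, as quoted in this post.
models = {
    "Gemma 4 26B": (26.0, 3.8),
    "Qwen 3.6 35B-A3B": (35.0, 3.0),
}

for name, (total, active) in models.items():
    at_16_bit = weight_memory_gb(total, 16)
    at_4_bit = weight_memory_gb(total, 4)
    share = active_fraction(active, total)
    print(f"{name}: {at_16_bit:.1f} GB at 16-bit, "
          f"{at_4_bit:.1f} GB at 4-bit, {share:.1%} active per token")
```

Under these assumptions the 26B model needs about 52 GB for its weights at 16-bit and about 13 GB at 4-bit, which is roughly why a 16GB consumer GPU becomes plausible only after quantization. The active share, around 15% for Gemma and 9% for Qwen, is what drives the per-token compute.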

This is the structural answer to the obvious question: how does a 3B-active model match Claude Opus on real tasks?

It doesn’t. It matches Claude Opus on the slice the model was tuned for. That’s the bridge to the next section.

What They’re Actually Good At

The honest version of the small-model story is task-specific.

Coding (in-domain). Qwen 3.6 35B-A3B beats much larger models on SWE-Bench Pro. The pelican test is the cocktail-party version; the SWE-Bench number is the engineer-meeting version.

On-device and offline. Gemma 4 (the smaller E2B variant) running natively on an iPhone with no API calls and no monthly fees is the privacy story. For applications where data cannot leave the device (regulated industries, personal assistants, anything HIPAA-adjacent), this is no longer aspirational.

Long-context retrieval. TurboQuant compression makes 128K context windows manageable on consumer GPUs. Reading whole codebases, whole legal briefs, whole patient charts on a workstation is now possible without renting cloud time.

Multimodal vision-language. Qwen 3.6 matches Claude Sonnet 4.5 on vision-language tasks despite being roughly one-tenth the size.

What they are not good at, because this is where the post earns trust:

Long-horizon agentic reliability. A 50-step coding task where the model has to maintain context, recover from errors, and not silently give up. Closed frontier models still lead. The same Ahmad Osman thread that broke the GLM-5.1 SWE-Bench Pro number also flagged that GLM-5.1’s 1,700-step autonomous run claim requires verification loops you have to build yourself. Not plug-and-play.

Voice-sensitive long-form writing. The essay you’re reading was drafted by Claude Opus, not Qwen 3.6. The taste-and-rhythm gap on long-form prose is real and won’t close fast.

Adversarial robustness. When inputs are hostile (prompt injection, weird user behavior, adversarial test data), closed labs have invested more in the failure modes.

But notice what’s on each list. The “not good at” list is exactly the cases where you’d route to a generalist anyway. For everything else, the specialization argument starts to look obvious.
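The two lists amount to a routing rule, and a rough sketch of one looks like this. Treat it as an illustration, not a recommendation: the task labels, the `Task` type, and the model tier names are all hypothetical, and the choice to let a data-locality requirement override everything else is my assumption.

```python
from dataclasses import dataclass

# Task kinds from the "not good at" list (labels are hypothetical).
GENERALIST_CASES = {
    "long_horizon_agent",
    "long_form_writing",
    "adversarial_input",
}

@dataclass
class Task:
    kind: str
    data_must_stay_local: bool = False

def pick_model(task: Task) -> str:
    """Return which tier of model should handle the task."""
    # Assumption: if data cannot leave the device, local wins outright.
    if task.data_must_stay_local:
        return "local-specialist"
    if task.kind in GENERALIST_CASES:
        return "closed-generalist"
    # Everything else defaults to the small, tuned model.
    return "local-specialist"

print(pick_model(Task("in_domain_coding")))
print(pick_model(Task("long_form_writing")))
print(pick_model(Task("long_horizon_agent", data_must_stay_local=True)))
```

The design choice worth arguing about is the default. Here the generalist is the exception you opt into, which is the stance the lists above suggest.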

The Real Story: Domain Specialists

This is where the post stops being about chatbot LLMs and gets to the real architecture of the next year.

Evo 2 is not a chatbot. It doesn’t talk. It understands DNA, specifically 8,000-letter genomic windows, the building blocks of every cancer mutation in the public ClinVar database. Open weights. The 7B variant runs on a single workstation GPU.

Earlier this week I showed what happens when you actually point Evo 2 at a real clinical problem. Six cancer genes, 4,471 variants, one workstation in a closet, one weekend, and the model beats AlphaMissense, the specialist tool clinicians actually use, on coding variants. And it extends into noncoding territory where AlphaMissense produces no score at all.

The point isn’t that genomics is special. The point is that this pattern, open weights plus workstation hardware plus a domain the model was specifically trained for, is repeating across every field. MedGemma for biomedical text. DeepSeek-Coder for code. AlphaFold 3 for protein structure. The specialists are showing up faster than the generalists can absorb their territory.

Which raises the operator question: how do you actually use them?