Sunday Deep Dive: The Math Trick That Cuts LLM Memory by 6x

Every Sunday, I pick one paper or release that’s genuinely worth your time, break it apart, and tell you why it matters. No hype. No summaries of summaries. Just the idea, explained.

Google just published TurboQuant, a compression technique that shrinks the key-value cache, the working memory your LLM builds up during inference, by 6x. No retraining. No accuracy loss. You just apply it.

If you run models at scale, or you’re watching your inference costs climb, this is the blog to read this week.

The Problem Nobody Talks About

When people talk about making LLMs smaller, they usually mean compressing the model itself. The weights. The file you download.

But there’s a different memory problem that hits at runtime, one that determines how many users your GPU can actually serve at once.

Every time a model processes a conversation, it keeps a running record of everything it’s seen so far. Think of it like a researcher’s notes. For every token (roughly, every word) the model reads, it jots down two things: a label describing what that piece of information is about, used to find it again later (the “key”), and the information itself (the “value”). The model needs these notes to connect ideas across a long conversation, to remember what was said on page one when it’s reading page fifty.

This running notebook is called the key-value cache, and it grows with every word. A short chat? Small notebook. A 128,000-token agent session analyzing a codebase? The notebook alone can consume more GPU memory than the entire model.
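To see why the notebook gets so big, here is a back-of-envelope sketch. The helper name `kv_cache_bytes` and the model shape (32 layers, 8 key-value heads, head dimension 128, roughly the shape of an 8B-parameter model) are assumptions for illustration; real models differ in layer count, head count, and how many key-value heads they share across queries.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bits_per_value: int = 16) -> float:
    """Approximate KV cache size in bytes for one sequence.

    The factor of 2 accounts for storing both a key and a value vector
    per token, per layer, per key-value head.
    """
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len
    return values * bits_per_value / 8

# Hypothetical config: 32 layers, 8 KV heads, head_dim 128, one 128,000-token session.
cache = kv_cache_bytes(32, 8, 128, 128_000)
weights = 8e9 * 2  # ~8B parameters stored at 16 bits (2 bytes) each

print(f"KV cache: {cache / 1e9:.1f} GB, weights: {weights / 1e9:.1f} GB")
```

Under these assumptions a single long session's cache (about 16.8 GB) already edges past the 16-bit weights (about 16.0 GB), and every additional concurrent user of that length adds another cache of the same size.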

That’s the hidden bottleneck. Not the model. The conversation history. It’s why your AI agent slows down on long tasks, why inference providers charge more for longer contexts, and why “just use a bigger context window” has been impractical for most teams.

The Idea

TurboQuant compresses that notebook down to a fraction of its size. Here’s the core insight, and it’s surprisingly intuitive.

The old approach and why it’s hard

The standard way to compress data is called quantization. You take a precise number (stored with 16 bits of detail) and round it to fit in a smaller container (say, 4 bits). Like rounding $47.83 to “about $50.” You lose some precision, but you save a lot of storage.
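Here is a minimal sketch of that rounding step, assuming the simplest scheme: uniform quantization where the minimum and maximum of the range are already known. The names `quantize` and `dequantize` are illustrative, not a library API, and real KV-cache quantizers differ in detail.

```python
def quantize(x: float, lo: float, hi: float, bits: int) -> int:
    """Map x in [lo, hi] to one of 2**bits integer levels (round to nearest)."""
    levels = 2 ** bits - 1
    x = min(max(x, lo), hi)  # clamp into the known range
    return round((x - lo) / (hi - lo) * levels)

def dequantize(q: int, lo: float, hi: float, bits: int) -> float:
    """Map an integer level back to an approximate value in [lo, hi]."""
    levels = 2 ** bits - 1
    return lo + q / levels * (hi - lo)

# 4-bit quantization of a price, assuming the range is known to be $0 to $100.
q = quantize(47.83, 0.0, 100.0, bits=4)
approx = dequantize(q, 0.0, 100.0, bits=4)
print(q, round(approx, 2))  # 7 46.67
```

The 4-bit code `7` stands in for $47.83 and decodes to about $46.67. Notice that the sketch only works because it was handed the range (`lo`, `hi`) in advance.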

The catch: different parts of the model produce values in completely different ranges. One layer’s numbers might span 0 to 100. Another’s might span -0.5 to 0.5. Before you can round anything, you need to measure each range, then scale everything to fit. That measurement and scaling step (normalization) costs compute, and the scale factors it produces have to be stored in full precision alongside every block of compressed numbers. That extra storage chips away at the savings you were after.
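The storage cost of normalization is simple arithmetic. This sketch assumes a common block-wise layout in which every block of values carries its own scale and zero-point (offset), each stored at 16 bits; the helper name and the block sizes are hypothetical.

```python
def effective_bits(value_bits: int, block_size: int,
                   constant_bits: int = 16, constants_per_block: int = 2) -> float:
    """Bits actually spent per value once per-block scale and zero-point are included."""
    overhead = constants_per_block * constant_bits / block_size
    return value_bits + overhead

print(effective_bits(4, 32))   # 5.0
print(effective_bits(4, 128))  # 4.25
```

With blocks of 32 values, a nominally 4-bit format really costs 5 bits per value, a 25% overhead. Larger blocks shrink the overhead, but then each block's range has to cover more values, which makes the rounding coarser.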

TurboQuant’s trick: change the coordinate system

Instead of trying to normalize all those different ranges, TurboQuant changes how it represents the data entirely.

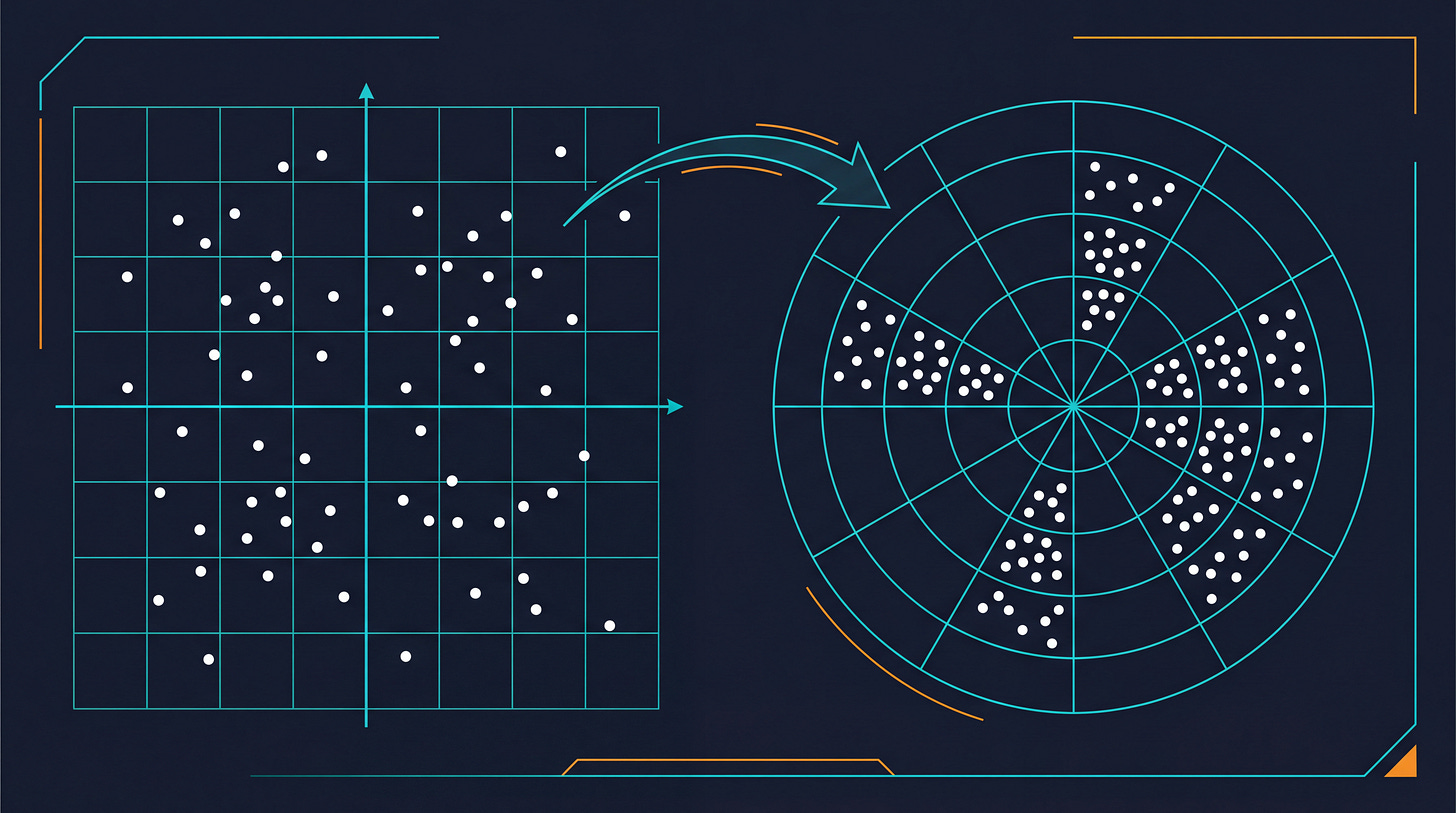

Here’s the analogy. Say you’re giving someone directions. You could say “Go 3 blocks East, then 4 blocks North.” Two separate numbers, each with its own range to worry about. Or you could say “Go 5 blocks on a compass bearing of about 37 degrees.” Same destination. But that angle, 37 degrees, lives on a circle. And circles have a built-in boundary: 0 to 360 degrees. Always. No matter what data you’re compressing.
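The directions example can be checked in a few lines. This sketch uses a compass bearing (measured clockwise from North), which is why `atan2` receives `(east, north)`; the usual math convention measures counterclockwise from East and would give about 53 degrees for the same trip. Either convention works as long as it is fixed.

```python
import math

east, north = 3.0, 4.0  # "3 blocks East, then 4 blocks North"

# Distance: the straight-line length of the trip.
distance = math.hypot(east, north)

# Compass bearing: measured clockwise from North, so atan2 takes (east, north).
bearing = math.degrees(math.atan2(east, north)) % 360

# Converting back recovers the original two numbers (up to floating-point error).
back_east = distance * math.sin(math.radians(bearing))
back_north = distance * math.cos(math.radians(bearing))

print(distance, round(bearing, 2))  # 5.0 36.87
```

Nothing is lost in the conversion: two numbers go in, two numbers come out, and only the second one (the angle) is guaranteed to sit inside a fixed range.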

That’s what TurboQuant does. It converts the model’s data into this circular representation (technically, polar coordinates). Because the boundaries are fixed, it can skip the expensive normalization step entirely. No measuring ranges. No per-layer calibration. No tuning for specific datasets. The paper calls this “data-oblivious,” meaning it works on any model without customization.
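To make the fixed-boundary idea concrete, here is a toy sketch, not the paper’s algorithm. It pairs up coordinates, converts each pair to a radius and an angle, and rounds only the angle onto a grid that always spans 0 to 2π. Because that range never changes, no per-block minimum or maximum is measured or stored. The radius is kept at full precision purely to keep the example short, and the function names are hypothetical.

```python
import math

def polar_quantize_pairs(vec, angle_bits=3):
    """Toy sketch: encode each (x, y) pair as (radius, angle index).

    The angle grid always covers [0, 2*pi), so no range needs measuring.
    The radius is left unquantized to keep the example short.
    """
    levels = 2 ** angle_bits
    step = 2 * math.pi / levels
    codes = []
    for x, y in zip(vec[0::2], vec[1::2]):
        r = math.hypot(x, y)
        theta = math.atan2(y, x) % (2 * math.pi)  # angle in [0, 2*pi)
        idx = round(theta / step) % levels        # nearest grid angle; 2*pi wraps to 0
        codes.append((r, idx))
    return codes, step

def polar_dequantize_pairs(codes, step):
    """Rebuild approximate (x, y) pairs from (radius, angle index)."""
    out = []
    for r, idx in codes:
        out.extend([r * math.cos(idx * step), r * math.sin(idx * step)])
    return out

vec = [3.0, 4.0, -0.2, 0.1, 50.0, -75.0]  # pairs with very different scales
codes, step = polar_quantize_pairs(vec, angle_bits=3)
approx = polar_dequantize_pairs(codes, step)
print(codes)
```

Pairs whose sizes differ by a factor of several hundred share the same 8-point angle grid, and each reconstructed point lands within `r * step / 2` of the original. This only illustrates the fixed-range property; the paper describes how TurboQuant actually transforms and encodes the data.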

There’s a second stage that adds a lightweight error correction, basically a plus-or-minus adjustment per value, to keep accuracy intact. The overhead is negligible.
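The source describes the second stage only as a plus-or-minus adjustment per value, so the following is an assumption about how sign bits alone can carry useful information, not the paper’s method. It is a generic random-projection sign sketch: project the leftover error onto random directions, keep just the sign of each projection (one bit), and later combine those signs with the error’s length to estimate dot products, which is what attention computes. With as many directions as dimensions this costs one bit per value; the demo uses many more directions so the estimate is visibly accurate. The function names are hypothetical.

```python
import math
import random

def sign_sketch(vec, directions):
    """Store one bit per random direction: the sign of the projection."""
    return [1 if sum(d * v for d, v in zip(dirn, vec)) >= 0 else -1
            for dirn in directions]

def estimate_dot(query, signs, norm, directions):
    """Estimate <query, vec> from the stored signs and the length of vec.

    For Gaussian directions s, E[<s, q> * sign(<s, k>)] = sqrt(2/pi) * <q, k> / |k|,
    so rescaling by sqrt(pi/2) * |k| gives an unbiased estimate.
    """
    m = len(directions)
    total = sum(sgn * sum(d * q for d, q in zip(dirn, query))
                for sgn, dirn in zip(signs, directions))
    return math.sqrt(math.pi / 2) * norm * total / m

rng = random.Random(0)  # shared seed: encoder and decoder can regenerate the directions
dim, m = 8, 20_000
directions = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(m)]

residual = [0.3, -0.1, 0.4, 0.0, -0.2, 0.1, 0.05, -0.3]  # leftover error to encode
query = [1.0, 0.5, -0.5, 2.0, 0.0, -1.0, 0.5, 1.0]

signs = sign_sketch(residual, directions)        # m bits
norm = math.sqrt(sum(v * v for v in residual))   # one stored number
true_dot = sum(q * v for q, v in zip(query, residual))
est_dot = estimate_dot(query, signs, norm, directions)
print(f"true: {true_dot:.3f}, estimated: {est_dot:.3f}")
```

In practice the random directions would be regenerated from the shared seed rather than stored, so the stored cost is just the sign bits plus one length. Fewer directions means fewer bits but a noisier estimate.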

The Numbers

- 6x memory reduction in the key-value cache with zero accuracy loss
- 3-bit precision per entry (down from 16-bit), no retraining required
- 8x faster attention computation on NVIDIA H100 GPUs
- Tested across five benchmark suites (LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, L-Eval) on Gemma and Mistral models
- Outperforms existing approaches on recall metrics

The “no retraining” part is what separates this from most compression research. Typically, you compress a model and then spend days fine-tuning it to recover the accuracy you lost. TurboQuant skips that step. You apply it at inference time. That’s the difference between a research result and something you can actually deploy.

This is where the free preview ends. Below the fold: what this means for your infrastructure budgets, three decisions this should change for teams running AI at scale, and the pricing signals to watch for from inference providers.