Two Gaps, Not One

Introducing: Builder-Leader: The AI Exoskeleton That Crosses the Gap (My Book)

Nilay Patel ran a piece on Decoder this week titled:

“The people do not yearn for automation.”

It’s good. He names a way of seeing the world he calls “software brain”: viewing everything as databases and loops you can run with code. He argues AI has turbocharged that mindset, and that the rest of the country is reacting to it the way you’d expect.

With a hard no.

Then, partway through, he reaches for an example of what software-brained people actually do with their days. He says they pay thousands of dollars a month to set up swarms of OpenClaw agents.

That’s me. I run OpenClaw.

So before anything else: yes. I see opportunities for automation. I write thousands of lines of code. I sit at a laptop and tell agents what to build, and a lot of the time they build it. Patel describes the type accurately.

The type is also relevant for a different reason than the one he’s writing about, and that difference is the entire point of this post.

I’m going to argue Patel is right about the mood, right about the cultural backlash, and pointing at the wrong gap for any executive trying to figure out what to do this quarter.

What Patel gets right

Software brain is a real thing and the cultural rejection of it is a real thing.

The smart-home anecdote is the one I keep coming back to. Apple, Google, and Amazon have spent more than a decade and many billions of dollars trying to make ordinary people care about home automation. Most ordinary people still don’t. They will buy a smart bulb and forget about it. They do not want to instrument their lives.

The polling tells the same story. AI’s favorability is below ICE in some polls. Gen Z’s hopefulness about AI dropped from a bad number last year to a worse one this year. Anger is up. The political violence around data centers is real and ugly and should embarrass anyone in this industry who thinks better marketing fixes it. Patel’s flattening line is the one that does the work:

“That’s why people hate AI. It flattens them.”

He’s also right that the tech industry’s “we just need to tell our story better” answer is delusional. People are using these tools every day. ChatGPT has nine hundred million weekly users. They know what it feels like.

You cannot advertise people out of their own experience.

The piece engages most generously when Patel paraphrases Ezra Klein on Silicon Valley AI types racing to make themselves “legible to the AI.” Feeding the model their files, calendar, email, messages, building persistent memory of their preferences. Patel calls that a doomed ask of regular people, and he’s right. Regular people will not flatten themselves into a database to please an LLM.

That is not the audience the book I’m writing is for.

The other gap

There is a second gap running underneath the cultural one, and Patel’s piece makes it harder to see, not easier.

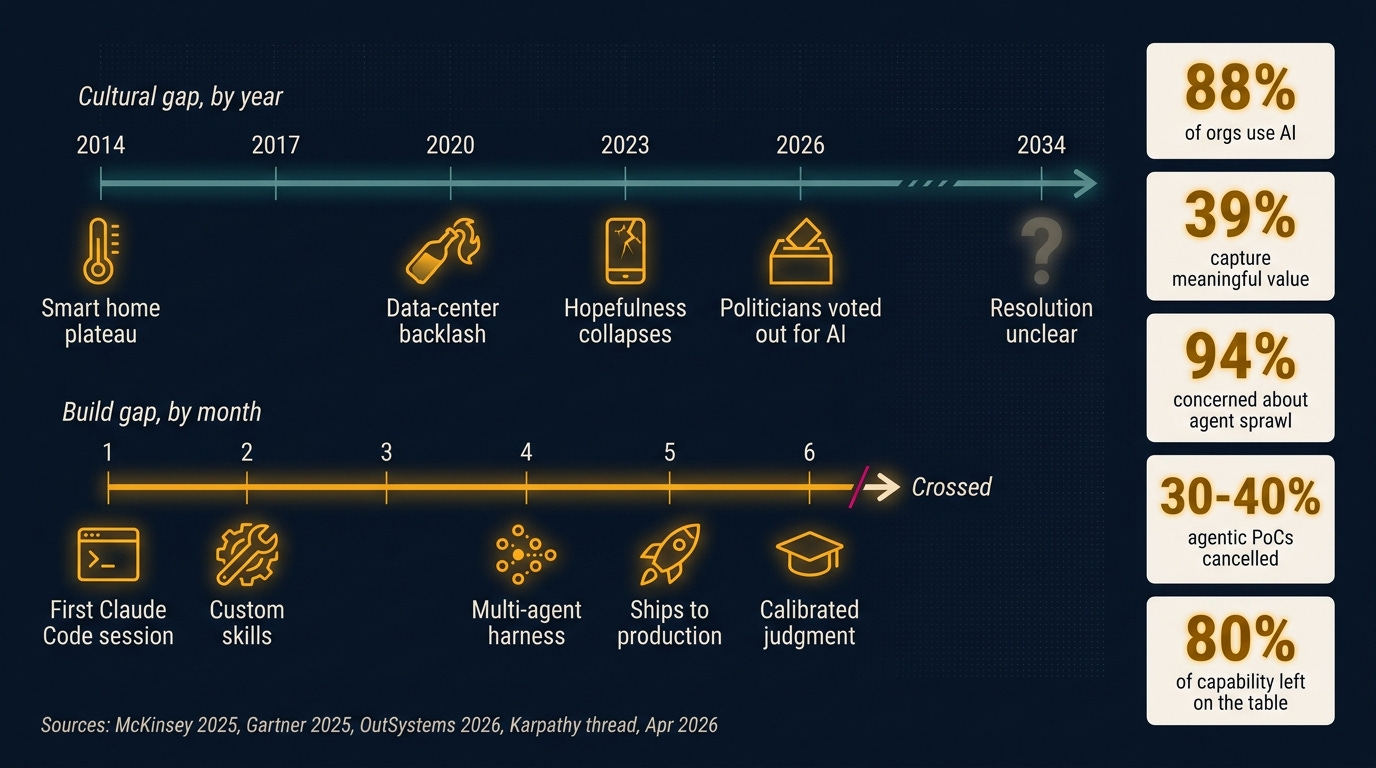

Andrej Karpathy named it on April 9 of this year in a tweet that got roughly twenty thousand likes by the end of the week. Two groups, he said, speaking past each other about AI. Not skeptics versus believers. Not chatbots versus AGI.

People who have built something with agentic systems on one side. People who have read about them, used the free tier, or watched a demo on the other.

The reply thread surfaced a third group hiding inside the second. Doodlestein called them “people magnifying power with custom tooling, skills, workflows, swarms.” Another reply landed harder: most people in Karpathy’s second group are leaving eighty percent of the capability on the table without knowing it.

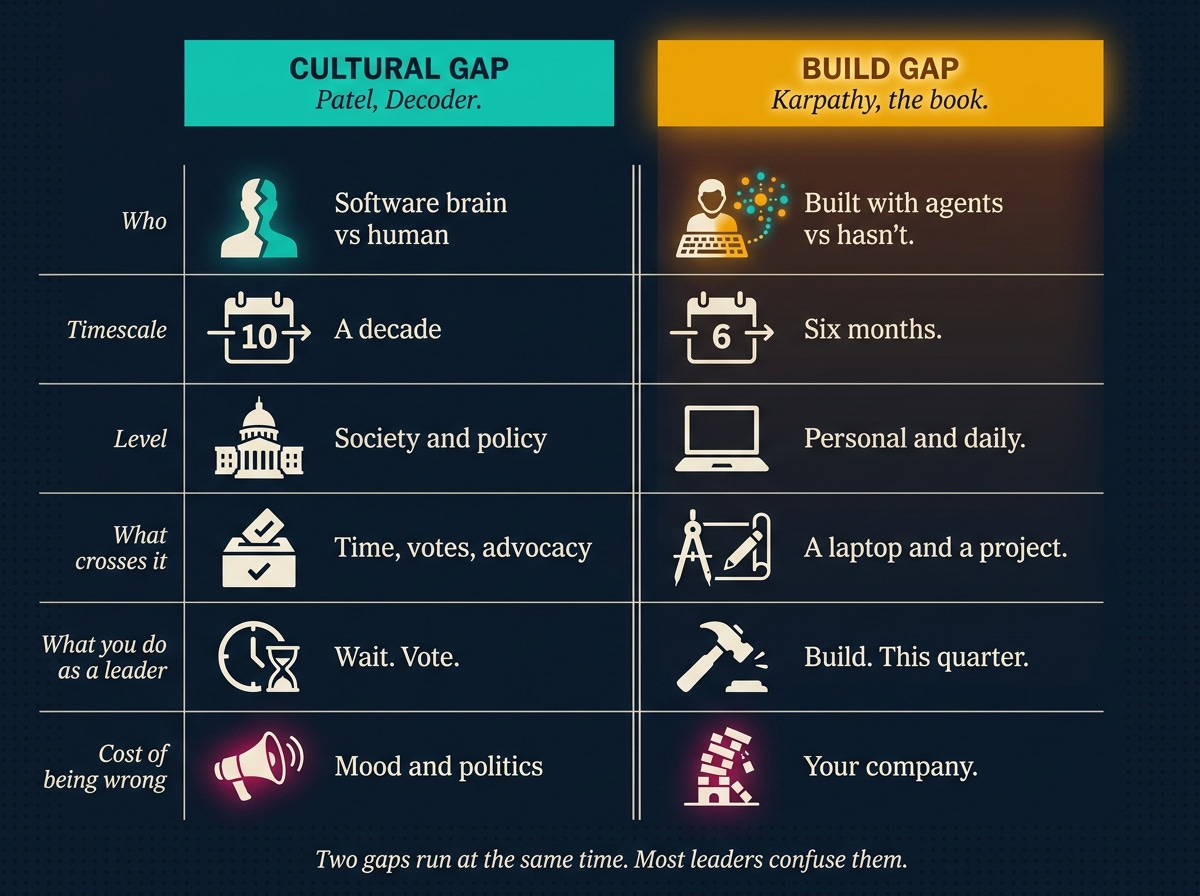

Patel and Karpathy are both right. They are also describing different gaps.

Patel’s gap: software brain versus everyone else. Karpathy’s gap: built with agents versus hasn’t.

These are not the same line.

Why they look like the same gap

Both have excited tech people on one side. Both have a population on the other side that finds AI underwhelming or hostile. The merge is easy. The merge is also the trap, because Patel’s piece makes the merge feel responsible.

Here is the trap as a sequence:

1. Read the Decoder piece.
2. Conclude AI is mostly hype because regular people don’t like it.
3. Skip the personal-build step yourself.
4. Approve the twenty-million-dollar platform contract someone else recommended.
5. Six months later you are one of the organizations McKinsey counts as using AI without capturing meaningful value from it, looking for someone to blame.

The cultural gap and the build gap can both be real. One of them can still be the one that decides which side of the next five years your company lands on.

The cultural gap is a decade. The build gap is six months.

Side by side

The cultural gap is real and probably deepening. The build gap is also real and decides whether your org ships agentic systems to production or stalls.

You can be right about the first and wrong about the second at the same time.

Most leaders currently are.

The executive failure mode

“Regular people don’t like AI, therefore AI is overhyped, therefore I don’t have to build.”

That is the most expensive sentence in enterprise AI right now.

McKinsey’s 2025 State of AI says eighty-eight percent of organizations report using AI and thirty-nine percent report capturing meaningful value from it. Gartner says thirty to forty percent of agentic-AI proofs-of-concept get cancelled. An OutSystems report this April, on a survey of nineteen hundred IT leaders, found ninety-four percent worried about agent sprawl across fragmented enterprise systems.

None of those numbers are stories about AI being overhyped. They are stories about leaders trying to deploy what they’ve never personally operated.

The build gap shows up as the failure rate.

Even Gary Marcus, the canonical LLM skeptic, conceded in an April Substack post that Claude Code is the single biggest advance in AI since the LLM, and that it is, quote, not a pure LLM.

Hostile witness. The model is no longer the question. The thing built around the model is.

What the build gap looks like from inside

One paragraph, not a tour.

Karpathy runs an autoresearch loop while he sleeps and reads the output in the morning. Reddit r/ClaudeAI has a twelve-thousand-upvote thread of operators trading folk-culture optimizations they call “caveman tokens.” The word “harness” hardened into a term of art in mainstream developer discourse this quarter. Doodlestein’s third group is real and it is bigger every month: people running custom tooling, skills, agents, swarms.

Yes, those are software-brain people. Patel is right about that. They are also the people whose calibration on what AI can do is correct, because they hold the data the rest of the debate is being conducted without.

If you are a senior leader, your job is not to become one of them. Your job is to know what one of them sees, well enough to direct the work and call bullshit when someone tries to sell you a slide deck.

What Patel is missing about leaders

Patel is writing about consumers and citizens. Both are downstream of policy, mood, and the social contract. Fair game for the cultural critique.

The reader I am writing for is not a consumer or a citizen in this context. They are an executive who has to allocate a budget on Tuesday.

They cannot wait for the cultural gap to close, because the cultural gap is going to be ugly for a decade.

They also cannot reason their way past the build gap, because the build gap is reality-shaped, not narrative-shaped. The failure rate does not care how anyone feels about software brain. It cares whether anyone senior in the org has personally driven a harness and shipped something with it.

This is the move Patel doesn’t make and shouldn’t be expected to make.

He is diagnosing the mood. The book diagnoses the move.

The book in two sentences

Builder-Leader: The AI Exoskeleton That Crosses the Gap is for executives who want to be on the right side of the build gap by Q3.

It does not require you to become an engineer, abandon your polish, or volunteer to be flattened into a database. It requires you to direct a harness, every day for six months, until you can tell what good looks like from the inside.

This is my quiet, nonchalant attempt at an announcement. Louder ones are forthcoming.

Preorder Builder-Leader on the book site.

Close

Patel is going to keep being right about the mood. AI will probably get less popular before it gets more.

The cultural gap is a decade.

The build gap is six months.

It is not subject to a vote. It does not move on a news cycle. It is the difference between leaders who can read an AI strategy proposal and tell whether it is real, and leaders who can’t.

By 2027 there will not be many of the second kind left in senior roles at companies that survive the decade.

Patel diagnosed the mood. The book diagnoses the move.

You can be right about both at once. You’d better be.