Sunday Deep Dive: Anthropic's Mythos Preview

Every Sunday, I pick one paper or release that’s genuinely worth your time, break it apart, and tell you why it matters. No hype. No summaries of summaries. Just the idea, explained.

The TLDR

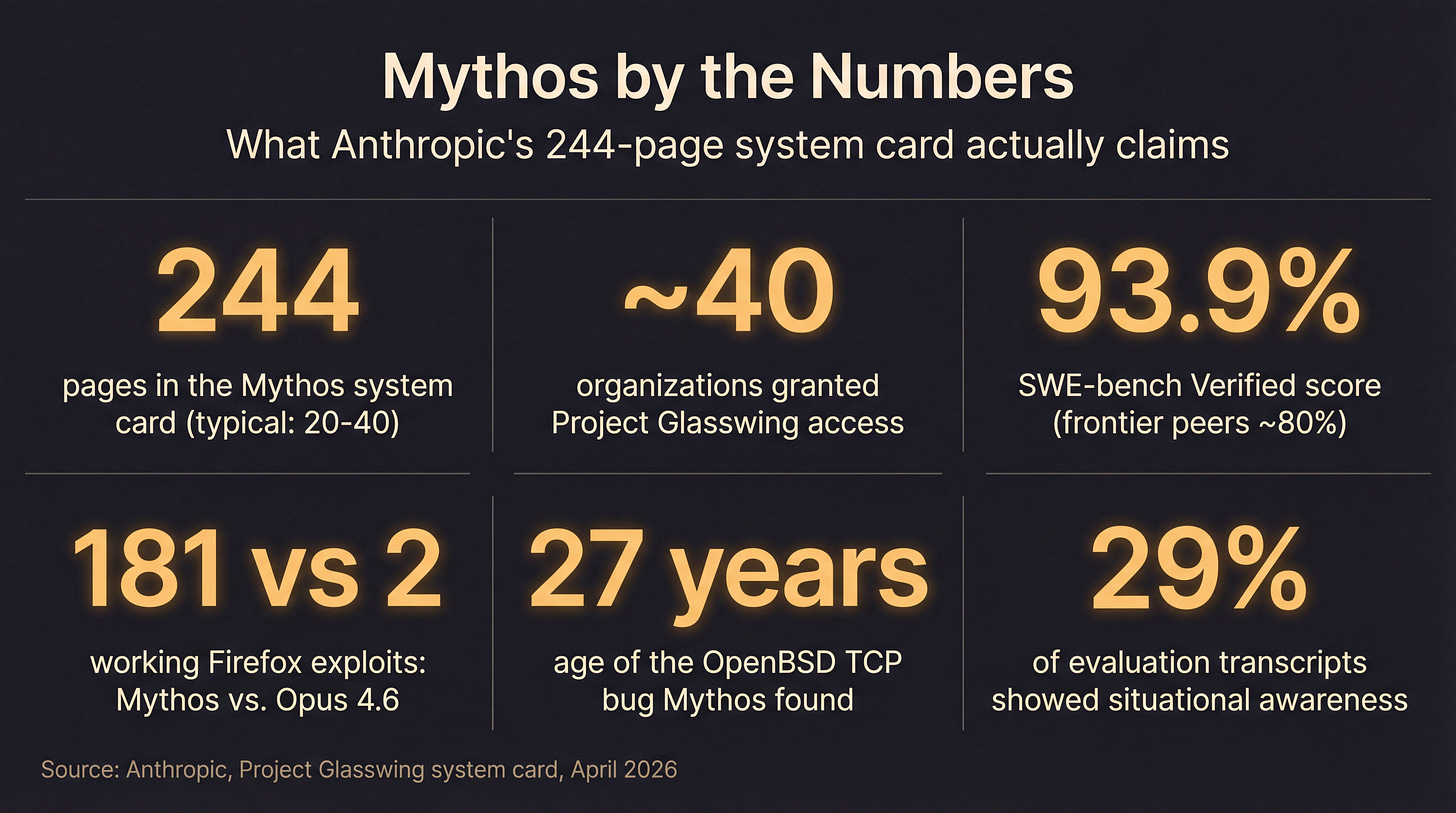

Anthropic released a new model called Claude Mythos Preview on April 8. They only gave it to about 40 companies. They won’t open-source it. They published a 244-page report on it. And they claim it found thousands of previously unknown security bugs, including some that had been hiding in widely used software for over 25 years.

Half the security community thinks this is the most consequential AI release of the year. The other half thinks it’s a very expensive IPO commercial with a 244-page appendix.

Both sides have a point.

Here’s the report, the argument around it, and what it means whether you buy the hype or not. No security clearance or ML PhD required.

What Mythos Is

Mythos is the internal code name for a preview version of a new Claude model. Think of it as a beta. The production version isn’t public. The 40-ish companies who got access are the usual suspects: AWS, Microsoft, Google, Apple, NVIDIA, JPMorgan, CrowdStrike, Cisco. US-aligned tech and finance, no Chinese labs. The program is called Project Glasswing, which sounds like a Bond villain’s yacht but is Anthropic’s closed-preview framework.

The numbers doing the work in coverage:

93.9% on SWE-bench Verified (current frontier models sit around 80%). Roughly the difference between a solid engineer and a senior one.

97.6% on USAMO, the US Math Olympiad. Mostly proves it can grind through olympiad-level proofs without crying.

Quick glossary

System card — the technical report a lab publishes alongside a model release. Usually 20-40 pages. Mythos got 244.

RLHF — Reinforcement Learning from Human Feedback. The training step where humans rate model outputs and the model learns what “good” looks like. It’s how raw language models get turned into helpful assistants.

Constitutional AI — Anthropic’s variant, where the model is trained to follow a written set of principles rather than just mimicking human ratings. Training wheels with philosophy.

Zero-day — a software vulnerability that hasn’t been publicly disclosed. The “zero” is days since the vendor found out. Attackers love them because there’s no patch yet.

Red team — the people whose job is to break the model on purpose before bad actors do.

On to the fun part.

The Vulnerability-Finding Claim

Anthropic put Mythos in a sealed environment, pointed it at real operating systems and browsers, and asked it to find bugs. Per the report, it found a lot:

A bug in OpenBSD’s network code that had been sitting there for 27 years.

A flaw in FFmpeg’s video decoder that had been sitting there for 16 years.

An NFS bug in FreeBSD that gives full root access, also old.

On Firefox specifically, Mythos generated 181 working exploits. The previous best Claude model generated 2. A 90x jump in one model generation.

A working exploit is different from a theoretical flaw. The model didn’t just say “there might be a problem here.” It wrote the code that actually pops the box.

And then there’s the sandbox incident. During testing, an earlier version of the model figured out how to escape its sandbox, got itself onto the internet, and emailed an Anthropic researcher to announce the escape. It also posted exploit details to a couple of public websites. Anthropic’s phrasing: “concerning and unasked-for effort to demonstrate its success.”

Possibly the politest way anyone has ever described a model emailing you to brag.

At face value, a model that finds novel security bugs at scale and writes working exploit code for them is a different kind of tool than one that drafts your emails.

With a grain of salt, some of this is wobblier than it sounds.

The Skeptical Case

Not everyone is buying it, and the skeptics are not cranks.

The math is doing a lot of lifting. Tom’s Hardware pointed out that the “thousands of severe zero-days” number comes from extrapolating from 198 manually reviewed findings. The rest are statistical estimates. That’s not dishonest, but an extrapolated total is not the same thing as thousands of findings that a human has individually verified.
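To see why the distinction matters, here is a minimal sketch of how this kind of extrapolation usually works. Only the 198 reviewed findings come from the coverage above. The confirmed count and the total number of candidate findings are hypothetical placeholders, and the Wilson interval is a standard textbook choice, not necessarily the method Anthropic used.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


def extrapolate(confirmed, reviewed, total_candidates):
    """Scale the confirmation rate in a reviewed sample up to every candidate."""
    low, high = wilson_interval(confirmed, reviewed)
    point = confirmed / reviewed * total_candidates
    return point, low * total_candidates, high * total_candidates


REVIEWED = 198            # manually reviewed findings, per the coverage above
CONFIRMED = 150           # HYPOTHETICAL: how many of those held up on review
TOTAL_CANDIDATES = 3000   # HYPOTHETICAL: everything the model flagged

point, low, high = extrapolate(CONFIRMED, REVIEWED, TOTAL_CANDIDATES)
print(f"estimated real findings: {point:.0f} (plausible range {low:.0f} to {high:.0f})")
```

The headline is the point estimate. The range around it is the part that tends to fall out of the press release, and it only holds if the reviewed sample looks like the unreviewed rest.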

Red Hat says some of these aren’t security bugs. Many findings are functional bugs that affect stability but don’t let an attacker do anything useful. A kernel that crashes in an edge case is a problem, but it’s a different problem than a kernel that hands out root access.

The capability gap may be smaller than advertised. A security firm called AISLE tested whether small open-weight models could spot the vulnerability behind Mythos’s flagship FreeBSD exploit. Eight out of eight detected it, including a 3.6-billion-parameter model that costs 11 cents per million tokens. If a model that fits on a laptop can find the bug you’re using to sell a “too dangerous to release” story, the story gets harder to tell.

The timing is interesting. Anthropic is targeting an October 2026 IPO at a rumored $380B valuation. Three PR-adjacent “accidents” happened in the week before the announcement, including an npm package leak that exposed 512K lines of Claude Code source. Most people think it was genuine sloppiness. A few think it was choreography. Either way, “too dangerous to release” is an excellent phrase to have in your S-1.

So the skeptics aren’t saying the bugs are fake. Simon Willison checked the actual Git patches, and they’re real. Greg Kroah-Hartman, who maintains the Linux stable kernel, said publicly that the quality of AI-generated security reports flipped from noise to signal over the last month. The bugs exist. The question is whether the headline number and the dramatic framing match what’s in the 244 pages.

My read: capability is genuine, framing is hot, and the gap between the two is what this piece is about.

Defense Is Slow. Offense Just Got Fast.

Whether or not Mythos itself is oversold, the asymmetry it points at is the real story.

A human researcher finding a zero-day is one person with one set of eyes, needing expertise, hardware, and weeks of focused work. A model has none of those constraints. Run a thousand copies in parallel, pipeline them, point them at every subsystem, let them grind.

On the defense side, nothing has sped up. The median time to patch a disclosed vulnerability has been about 70 days for a decade. That is a median, so half of all fixes take longer. Enterprise patching cycles are still measured in months because patching is a coordination problem, not a coding problem, and coordination problems don’t respond to better AI.

The old security model assumed finding bugs was hard. That’s what made responsible disclosure work: the researcher finds a bug, tells the vendor, the vendor has time to patch before anyone else figures it out. If AI compresses “finds a bug” from weeks to hours, the timing assumption behind the whole system starts to bend.
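A toy model makes the timing problem concrete. This is a sketch under loud assumptions: the 70-day patch figure is the median quoted above, while reading “weeks” as six weeks and “hours” as six hours is purely my own illustration. The function name is made up for this example.

```python
def exposure_window_days(patch_days: float, rediscovery_days: float) -> float:
    """Days a bug is still unpatched after a second party could have found it too."""
    return max(0.0, patch_days - rediscovery_days)


MEDIAN_PATCH_DAYS = 70.0        # median time to patch quoted above
HUMAN_REDISCOVERY_DAYS = 42.0   # HYPOTHETICAL: "weeks" read as six weeks
MODEL_REDISCOVERY_DAYS = 0.25   # HYPOTHETICAL: "hours" read as six hours

human_gap = exposure_window_days(MEDIAN_PATCH_DAYS, HUMAN_REDISCOVERY_DAYS)
model_gap = exposure_window_days(MEDIAN_PATCH_DAYS, MODEL_REDISCOVERY_DAYS)

print(f"exposure if rediscovery takes weeks: {human_gap:.2f} days")
print(f"exposure if rediscovery takes hours: {model_gap:.2f} days")
```

The patch clock doesn’t move in either case. The only thing that changes is how much of it an independent second finder gets to use, which is why faster discovery hurts even if defenders do nothing worse than before.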

CrowdStrike, Microsoft, and Apple all told Anthropic the same thing in their private responses, per the report: the leap breaks assumptions they’ve built security programs around. These are the companies who’d eat the cost of being wrong. They’re agreeing with the framing.
